Photonic computing startup Lightmatter has raised $400 million to blow one of modern datacenters’ bottlenecks wide open. The company’s optical interconnect layer allows hundreds of GPUs to work synchronously, streamlining the costly and complex job of training and running AI models.

The growth of AI and its correspondingly immense compute requirements have supercharged the datacenter industry, but it’s not as simple as plugging in another thousand GPUs. As high performance computing experts have known for years, it doesn’t matter how fast each node of your supercomputer is if those nodes are idle half the time waiting for data to come in.

The interconnect layer or layers are really what turn racks of CPUs and GPUs into effectively one giant machine, so it follows that the faster the interconnect, the faster the datacenter. And it’s looking like Lightmatter builds the fastest interconnect layer by a long shot, using the photonic chips it’s been developing since 2018.

“Hyperscalers know if they want a computer with a million nodes, they can’t do it with Cisco switches. Once you leave the rack, you go from high density interconnect to basically a cup on a string,” Nick Harris, CEO and founder of the company, told TechCrunch. (You can see a short talk he gave summarizing this issue here.)

The state of the art, he said, is NVLink and particularly the NVL72 platform, which puts 72 Nvidia Blackwell units wired together in a rack, capable of a maximum of 1.4 exaFLOPs at FP4 precision. But no rack is an island, and all that compute has to be squeezed out through 7 terabits of “scale up” networking. Sounds like a lot, and it is, but the inability to network these units faster to each other and to other racks is one of the main barriers to improving performance.

“For a million GPUs, you need multiple layers of switches, and that adds a huge latency burden,” said Harris. “You have to go from electrical to optical to electrical to optical… the amount of power you use and the amount of time you wait is huge. And it gets dramatically worse in bigger clusters.”
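To make the “multiple layers of switches” point concrete, here is a rough sketch that counts how many switch tiers a cluster of a given size would need. It assumes a folded-Clos (fat-tree) topology built from identical switches, in which L tiers of radix-k switches can attach at most 2·(k/2)^L endpoints. The topology, the radix of 64, and all function names are illustrative assumptions, not details from Harris or Lightmatter.

```python
def max_endpoints(tiers: int, radix: int) -> int:
    """Upper bound on endpoints for a fat tree with `tiers` levels of switches.

    Assumes every switch has `radix` ports, split evenly between
    downlinks and uplinks (the classic folded-Clos construction).
    """
    if tiers < 1:
        raise ValueError("tiers must be >= 1")
    if radix < 4 or radix % 2:
        raise ValueError("radix must be an even number >= 4")
    return 2 * (radix // 2) ** tiers


def tiers_needed(endpoints: int, radix: int) -> int:
    """Smallest number of tiers whose capacity covers `endpoints`."""
    tiers = 1
    while max_endpoints(tiers, radix) < endpoints:
        tiers += 1
    return tiers


def worst_case_switch_hops(tiers: int) -> int:
    """Switches crossed on the longest path: up to the top tier and back down."""
    return 2 * tiers - 1


if __name__ == "__main__":
    RADIX = 64  # assumed port count per switch, for illustration only
    for gpus in (72, 1_024, 1_000_000):
        t = tiers_needed(gpus, RADIX)
        hops = worst_case_switch_hops(t)
        print(f"{gpus:>9,} GPUs: {t} tier(s), up to {hops} switch hops")
```

Under these assumptions, a million endpoints needs four tiers and up to seven switch traversals on the longest path, which is the kind of compounding latency and power cost Harris describes.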

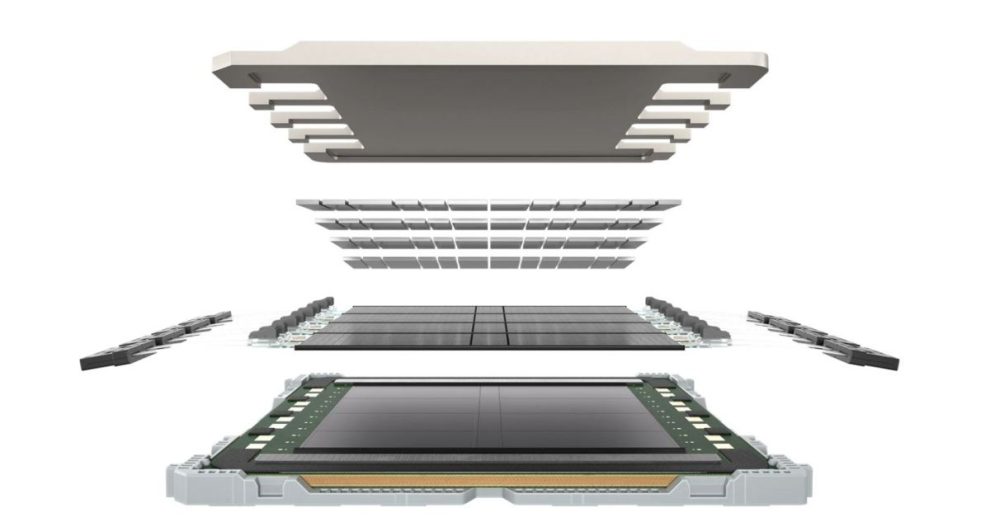

So what’s Lightmatter bringing to the table? Fiber. Lots and lots of fiber, routed through a purely optical interface. With up to 1.6 terabits per fiber (using multiple colors), and up to 256 fibers per chip… well, let’s just say that 72 GPUs at 7 terabits starts to sound positively quaint.
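For a sense of scale, the two “up to” figures can simply be multiplied. The sketch below does that arithmetic and compares the result with the 7-terabit NVL72 figure. The product is a naive theoretical ceiling derived from the quoted maxima, not a number Lightmatter has claimed, and the constant and function names are made up for illustration.

```python
TBPS_PER_FIBER = 1.6        # "up to" figure quoted in the article
FIBERS_PER_CHIP = 256       # "up to" figure quoted in the article
NVL72_SCALE_UP_TBPS = 7.0   # scale-up figure quoted for the NVL72 rack


def peak_chip_tbps(per_fiber_tbps: float, fibers: int) -> float:
    """Naive ceiling: every fiber running at its maximum rate at once."""
    return per_fiber_tbps * fibers


if __name__ == "__main__":
    peak = peak_chip_tbps(TBPS_PER_FIBER, FIBERS_PER_CHIP)
    print(f"Theoretical peak per chip: {peak:.1f} Tbps")
    print(f"Ratio to the 7 Tbps figure: {peak / NVL72_SCALE_UP_TBPS:.1f}x")
```

That works out to roughly 410 terabits per chip on paper, well above what any shipping product delivers today.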

“Photonics is coming way faster than people thought. People have been struggling to get it working for years, but we’re there,” said Harris. “After seven years of absolutely murderous grind,” he added.

The photonic interconnect currently available from Lightmatter does 30 terabits, while the on-rack optical wiring is capable of letting 1,024 GPUs work synchronously in their own specially designed racks. In case you’re wondering, the two numbers don’t increase by similar factors because a lot of what would need to be networked to another rack can be done on-rack in a thousand-GPU cluster. (And anyway, 100 terabit is on its way.)
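The mismatch between the two growth factors is easy to check. This small sketch compares the article’s figures for the NVL72 baseline (72 GPUs, 7 terabits) with Lightmatter’s (1,024 GPUs, 30 terabits). It assumes the two bandwidth figures are measured on a comparable basis, which the article does not spell out, and the helper name is illustrative.

```python
def scale_factor(new: float, old: float) -> float:
    """How many times larger `new` is than `old`."""
    if old <= 0:
        raise ValueError("old must be positive")
    return new / old


if __name__ == "__main__":
    bandwidth_factor = scale_factor(30, 7)   # terabits: Lightmatter vs. NVL72
    gpu_factor = scale_factor(1_024, 72)     # synchronized GPUs per domain
    print(f"Bandwidth grows about {bandwidth_factor:.1f}x")
    print(f"GPU count grows about {gpu_factor:.1f}x")
```

Bandwidth rises a little over fourfold while the GPU count rises about fourteenfold, which is the gap the paragraph above explains: more of the traffic stays inside the larger rack.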

The market for this is enormous, Harris pointed out, with every major datacenter company from Microsoft to Amazon to newer entrants like xAI and OpenAI showing an endless appetite for compute. “They’re linking together buildings! I wonder how long they can keep it up,” he said.

Many of these hyperscalers are already customers, though Harris wouldn’t name any. “Think of Lightmatter a little like a foundry, like TSMC,” he said. “We don’t pick favorites or attach our name to other people’s brands. We provide a roadmap and a platform for them, just helping grow the pie.”

But, he added coyly, “you don’t quadruple your valuation without leveraging this tech,” perhaps an allusion to OpenAI’s recent funding round valuing the company at $157 billion, though the remark could just as easily be about his own company.

This $400 million D round values it at $4.4 billion, a similar multiple of its mid-2023 valuation that “makes us by far the largest photonics company. So that’s cool!” said Harris. The round was led by T. Rowe Price Associates, with participation from existing investors Fidelity Management and Research Company and GV.

What’s next? In addition to interconnect, the company is developing new substrates for chips so that they can perform even more intimate, if you will, networking tasks using light.

Harris speculated that, apart from interconnect, power per chip is going to be the big differentiator going forward. “In ten years you’ll have wafer-scale chips from everybody. There’s just no other way to improve the performance per chip,” he said. Cerebras is of course already working on this, though whether they can capture the true value of that advance at this stage of the technology is an open question.

But Harris, seeing the chip industry coming up against a wall, plans to be ready and waiting with the next step. “Ten years from now, interconnect is Moore’s Law,” he said.
